OpenAI ChatGPT, the Most Powerful Language Model: An Overview

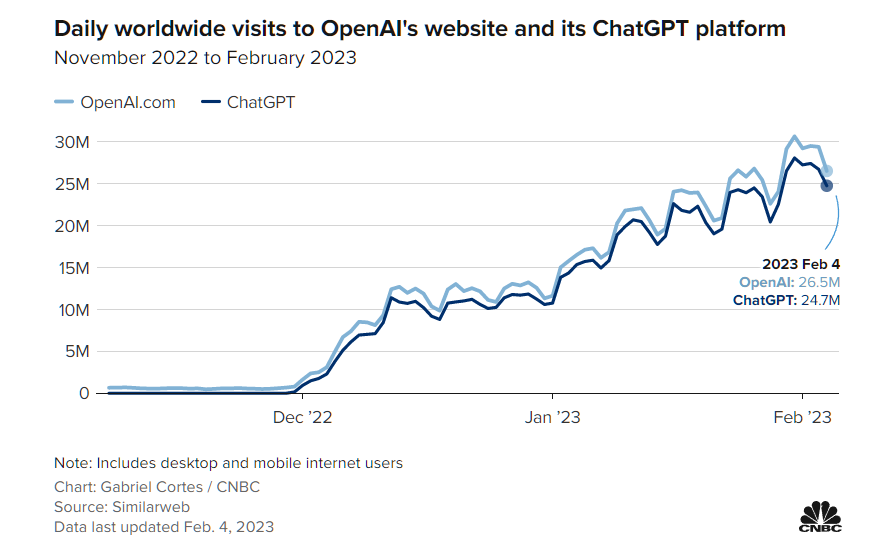

OpenAI introduced ChatGPT on November 30, 2022, and the app immediately made a splash. Within five days of its release, it had 1 million users; three months later, there were over 100 million, which makes it look like the industry’s next big disrupter. The technology behind ChatGPT has been around for much longer, albeit with much less publicity.

But OpenAI’s decision to make the system freely available on the Internet has opened it up to millions. The web quickly filled with heated discussions about the possible impact of ChatGPT on work, education, business, and, most worryingly for Google, the future of Internet search.

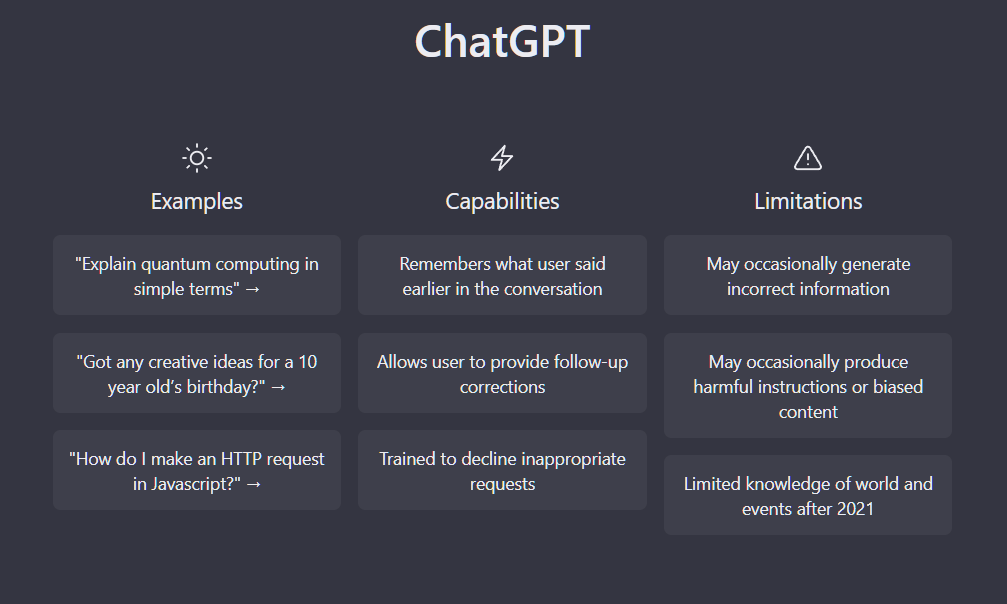

Although users from different sectors and backgrounds actively explore ChatGPT, only a few understand this tool’s technology, mechanism, and potential. In this article, we present ChatGPT in its various aspects: the principles of its training and operation, its use cases, and its limitations. But let’s start with the circumstances of ChatGPT’s creation.

Who Created ChatGPT?

For those who aren’t familiar, ChatGPT is owned and developed by OpenAI, a San Francisco-based artificial intelligence research laboratory founded in 2015. Among its co-founders, in addition to Sam Altman, Ilya Sutskever, Greg Brockman, Wojciech Zaremba, and John Schulman, was Elon Musk, who left the board of directors in 2018.

The company is committed to promoting and developing friendly AI that benefits all of humanity. Its research focuses on developing advanced machine learning models and methods, particularly deep learning and reinforcement learning.

Before becoming famous with ChatGPT, OpenAI developed several advanced AI models such as GPT-2 and GPT-3, Codex, and DALL-E. These models can understand and generate text, turn natural language into code, and create realistic images, respectively. OpenAI has also developed an API for GPT-3 that can generate text, translate languages, answer questions, and more.

What is OpenAI ChatGPT, and How Does it Work?

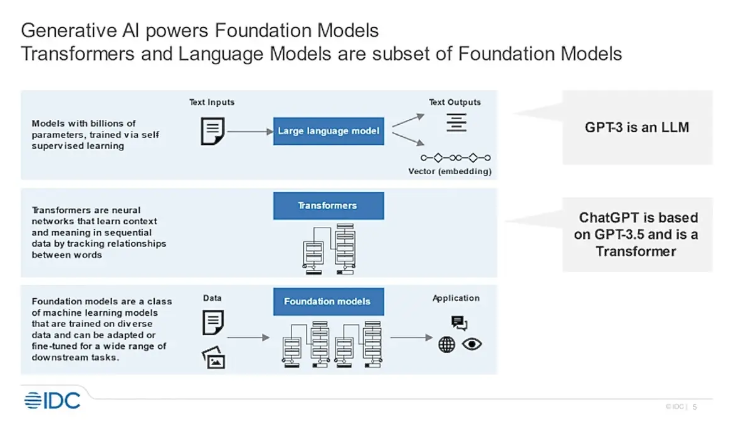

In fact, ChatGPT is not a superintelligence but an advanced application of a large language model (LLM) from the GPT series, which OpenAI launched in 2018. An LLM is a machine learning model focused on natural language processing (NLP, an essential sub-branch of data science). ChatGPT is built on the GPT-3 family of models (GPT stands for Generative Pre-trained Transformer): the model is pre-trained on a huge dataset of text and then fine-tuned for specific tasks such as:

- text generation, completion, and summary

- language translation

- code debugging

- question answering

- part-of-speech tagging

- conversational AI

- sentiment analysis

- named entity recognition

The language model can take a whole conversation into account, from beginning to end, and produce human-like answers. That means it can interpret natural language queries, respond to them, and follow up on them in a way that often reads as if a human had written the reply. But exactly how does this work? Below is a step-by-step explanation of the process:

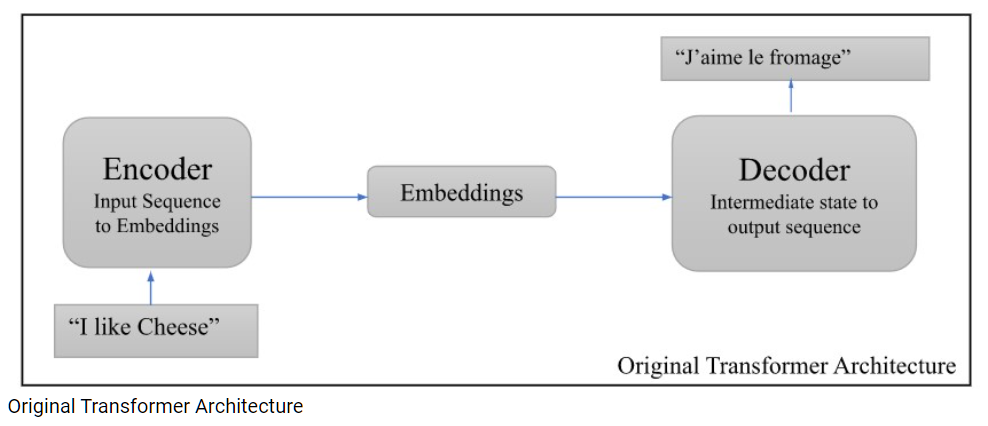

- Input processing: a human user enters commands or questions into the ChatGPT text panel.

- Tokenization: the entered text is tokenized; the program divides it into separate tokens (words or fragments of words) for analysis.

- Input embedding: each token is converted into a numeric vector (an embedding) and passed into the transformer part of the neural network.

- Attention: the transformer uses its attention layers to weigh how the input tokens relate to one another and produces a probability distribution over the possible next tokens. The output is then drawn from this distribution.

- Text generation and output: ChatGPT generates an output response, and the human user receives a text response.
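The steps above can be sketched in a few lines of Python. This is a toy illustration only, not OpenAI's implementation: the nine-word `VOCAB`, the random four-dimensional embeddings, the word-level `tokenize` function, and the single attention step are all assumptions made for the example. Real models use subword tokenizers, learned weights, and many stacked attention layers.

```python
import math
import random

# Hypothetical toy vocabulary; index 0 is reserved for unknown tokens.
VOCAB = ["<unk>", "how", "does", "chatgpt", "work", "?", "it", "predicts", "tokens"]


def tokenize(text):
    """Split text into lowercase word and punctuation tokens."""
    tokens, word = [], ""
    for ch in text.lower():
        if ch.isalnum():
            word += ch
        else:
            if word:
                tokens.append(word)
                word = ""
            if not ch.isspace():
                tokens.append(ch)
    if word:
        tokens.append(word)
    return tokens


def to_ids(tokens):
    """Map each token to its vocabulary index (0 for unknown tokens)."""
    return [VOCAB.index(t) if t in VOCAB else 0 for t in tokens]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def make_embeddings(dim=4, seed=0):
    """Random stand-ins for the embedding vectors a real model would learn."""
    rng = random.Random(seed)
    return [[rng.uniform(-1, 1) for _ in range(dim)] for _ in VOCAB]


def attend(vectors):
    """One attention step: the last token weighs every token by similarity."""
    query = vectors[-1]
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, v) / scale for v in vectors])
    return [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(len(query))]


def next_token_distribution(text, embeddings):
    """Tokenize, embed, attend, then score every vocabulary entry."""
    ids = to_ids(tokenize(text))
    vectors = [embeddings[i] for i in ids]
    context = attend(vectors)
    logits = [dot(context, e) for e in embeddings]
    return softmax(logits)


embeddings = make_embeddings()
probs = next_token_distribution("How does ChatGPT work?", embeddings)
best = max(range(len(VOCAB)), key=lambda i: probs[i])
print(VOCAB[best], round(probs[best], 3))
```

Because the embeddings here are random, the predicted token is meaningless; the point is only the shape of the pipeline, from text to tokens to vectors to a probability for every entry in the vocabulary.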

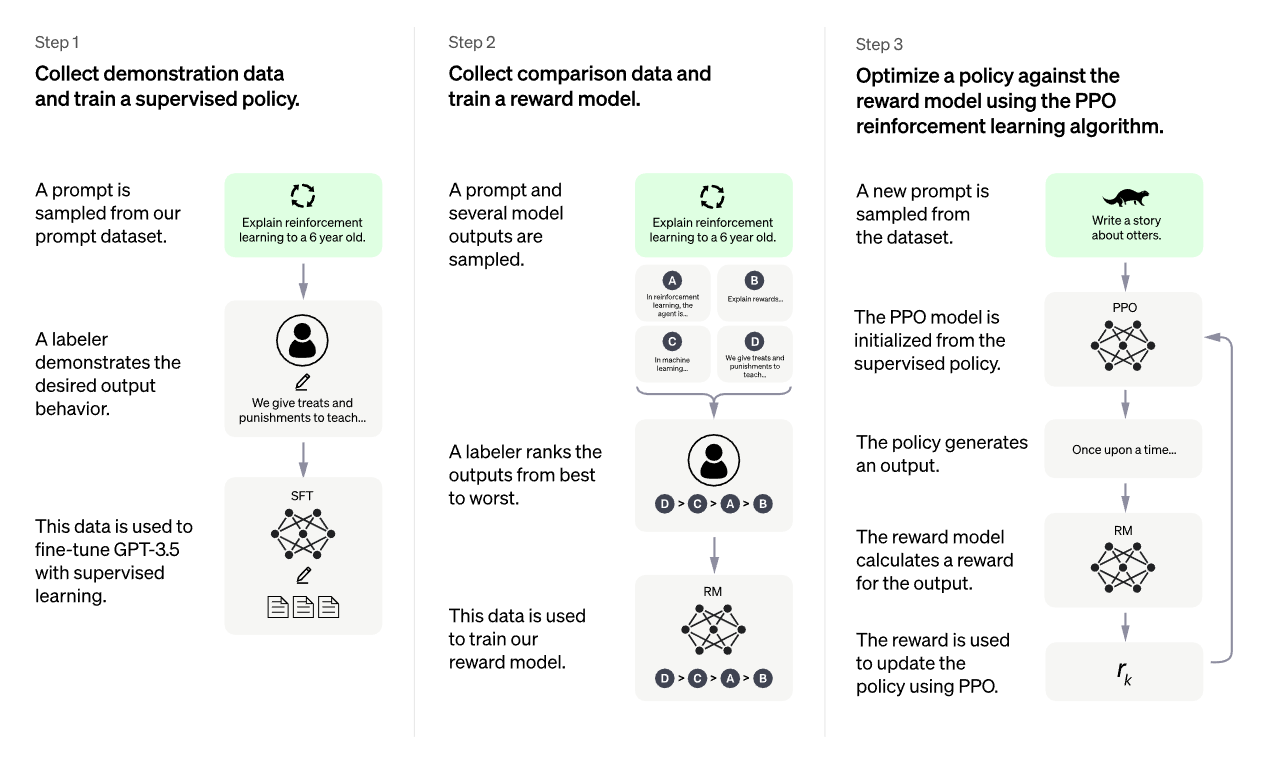

How Was ChatGPT Trained?

ChatGPT itself was not trained from scratch. It is actually a fine-tuned version of models from the GPT-3 and GPT-3.5 series, which finished training in early 2022 on Azure AI supercomputing infrastructure. They were pre-trained on a huge amount of data collected from numerous sources such as articles, websites, forums, and even books. The raw text dataset amounted to about 45 terabytes, which is considered very large for training a language model. More precisely, about 300 billion tokens (words and word fragments) were fed into the system.

Unlike AI assistants like Alexa or Siri, ChatGPT doesn’t use the Internet to find answers. After training on data from the Internet, the chatbot does not keep the original data; it retains only the neural connections, or patterns, it has extracted from it. These patterns are what ChatGPT draws on when it tries to answer a query.

It does not know for sure what the facts of the answer should be, but it tries with impressive accuracy to predict the logical sequence of a text in human language that will be the most appropriate answer. Based on its learning, it builds a sentence word by word, choosing the most likely “token” next. That is how you get answers to your questions.

Think of it as a greatly enhanced and smarter version of auto-complete software: you start typing a sentence, and the system suggests what you might say next.
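To make the auto-complete analogy concrete, here is a minimal sketch of next-word prediction. It counts which word follows which in a tiny made-up corpus and then always picks the most frequent follower. The corpus and the function names `train_bigrams` and `complete` are assumptions for this example; ChatGPT uses a neural network over tokens rather than simple word counts.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every word, which words follow it and how often."""
    counts = defaultdict(Counter)
    words = corpus.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def complete(counts, prompt, max_words=5):
    """Extend the prompt one word at a time with the most frequent follower."""
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = counts.get(words[-1])
        if not candidates:
            break  # the last word never appeared in the corpus
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical training text, chosen so that the counts have no ties.
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train_bigrams(corpus)
print(complete(model, "the", max_words=3))  # prints "the cat sat on"
```

The model has no idea what a cat is. It only knows that "cat" most often follows "the" in its training text, which is the same principle, at a vastly smaller scale, as building a sentence by choosing the most likely next token.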

Potential Applications of OpenAI ChatGPT

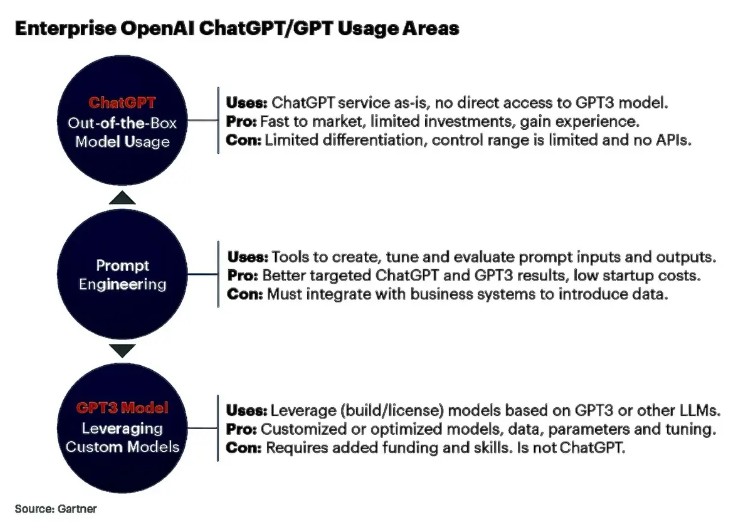

As for ChatGPT and its real application, one of the biggest OpenAI investors, Microsoft, is already using it. The first integration was in Teams Premium, with some OpenAI features exposed to automate tasks and provide transcripts.

Now that ChatGPT is available on Bing, it’s only a matter of time before ChatGPT and other OpenAI technologies are implemented in applications like PowerPoint and Word. Microsoft is also not opposed to its own employees using the tool, with one clarification: “just don’t share sensitive data with it.”

All other users can also use the features of this tool at their discretion. Let’s look at the best uses of ChatGPT and its underlying GPT-3 language model.

Research assistance

ChatGPT makes finding general information much more convenient than sifting through hundreds of Google search results. It can answer a wide range of questions and help with tasks such as planning a trip or writing a resume, all in one place. ChatGPT showed its power by reportedly passing a bar exam and a Google coding test.

However, whether ChatGPT proves to be an ideal alternative from a practical standpoint will depend on your specific needs and preferences. For example, it might be the right choice for users who value a more intuitive and conversational search experience.

Language translation

One area where ChatGPT can excel is machine translation. One of the greatest advantages of a chatbot is its ability to adjust a translation based on context or additional information the user provides, something Google Translate currently cannot do. Also, ChatGPT provides an “interpretation” rather than just a literal translation of the source text, which is definitely an advantage.

Content generation

Just as a person who knows a language can guess what comes next in a text or even develop new words or concepts independently, a large language model can apply its knowledge to predict and create content. ChatGPT can draft many kinds of text, including blog posts, articles, essays, and full-length research papers.

Another extremely useful feature of ChatGPT is its ability to quickly parse unstructured texts or documents, identify key entities, classify them according to predefined categories, and even determine the overall sentiment of the text.

Code troubleshooting

Another amazing feature of ChatGPT is the ability to write and debug code in multiple programming languages, including JavaScript, Java, Python, C#, and more. However, the code it generates is not always free of bugs and errors; its quality depends on the complexity of the requirements and the hints given to it. Because of this, an engineer will always need to review the code it generates.

In addition, you can use it to generate SQL queries, a task that usually falls to data scientists and analysts. With ChatGPT, a process that normally takes days or weeks can become almost instantaneous, as anyone can ask a question in plain language and ChatGPT can work out the SQL needed to get the information. For example, ChatGPT can provide sample code for a SQL query such as “find tables by city and sorted by last name.”
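As a rough illustration, the kind of SQL such a request might produce is shown below, wrapped in Python's built-in `sqlite3` module so that it can be run as is. The `customers` table, its columns, and the sample rows are hypothetical and exist only for this example; this reads the request as "find rows for a given city, sorted by last name," and an actual answer from ChatGPT would depend on the schema you describe to it.

```python
import sqlite3

# In-memory database with a hypothetical customers table for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (first_name TEXT, last_name TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        ("Ana", "Lopez", "Austin"),
        ("Ben", "Adams", "Austin"),
        ("Cy", "Young", "Boston"),
    ],
)


def customers_in_city(connection, city):
    """Return (first_name, last_name) rows for one city, sorted by last name."""
    query = """
        SELECT first_name, last_name
        FROM customers
        WHERE city = ?
        ORDER BY last_name
    """
    # The ? placeholder keeps user input out of the SQL string itself.
    return connection.execute(query, (city,)).fetchall()


print(customers_in_city(conn, "Austin"))  # [('Ben', 'Adams'), ('Ana', 'Lopez')]
```

Even for a query this small, it is worth checking the result against data you know, since a generated query can be syntactically valid and still answer a slightly different question.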

Limitations of OpenAI ChatGPT

Remember, however, that the chatbot does not think through any of its responses. It’s an AI trained to recognize patterns in vast amounts of text gathered from the Internet and then further trained by humans to deliver more useful, better dialog.

The answers you get may sound believable and authoritative, but they might be wrong, as OpenAI warns. Also, while search engines display articles and news from sources you can check, the responses provided by the chatbot do not include the source of the information or any citations.

The ChatGPT team does not deny other drawbacks of the model, including:

Outdated knowledge database

ChatGPT cannot answer questions about recent events because it only provides facts and data that the developers collected up to 2021. That means it cannot help if you’re looking for information based on 2022 and 2023 data.

Overuse of certain phrases

In an attempt to create complex content, ChatGPT can be repetitive and superficial. It also often writes in a stuffier and more pedantic tone than a writer might prefer. And there are problems with the uniqueness of the answers: ChatGPT can provide the same text to more than one user.

Answers are not always correct

ChatGPT is still being improved, and it does not give 100% accurate answers. It may fill in the gaps with incorrect details if there is insufficient data. As a result, Stack Overflow temporarily banned answers generated by ChatGPT, stating that the average rate of correct answers from ChatGPT is too low and that its low-quality answers look too plausible.

Bias and ethical concerns

Unfortunately, there is a huge amount of toxic content in the training data, which can negatively impact the model’s output. ChatGPT can “absorb” the prejudices present in that data and in the people who train it, and potentially generate sexist, racist, and other offensive content.

GPT-4, the next iteration of the underlying model, is expected to be more accurate and advanced. There is no exact release date yet, but the New York Times has reported that it will be released sometime in the first quarter of 2023.

How to Use ChatGPT for Coding

ChatGPT is not the first coding “assistant.” Software developers have also been using other ML tools such as GitHub Copilot, which suggests improvements and points out potential problems in the code, and does it well enough. But it does not formulate detailed responses to dialogue prompts. ChatGPT can do this, which makes it stand out as a coding helper.

Although OpenAI ChatGPT can be a powerful tool for developers, it’s crucial to understand its limitations and use it properly. To be more specific, you can use it to find:

- Errors in your code. If you have a segment of code you cannot debug, you can paste it into ChatGPT with information about what you want to achieve and what is actually happening. You’ll get useful pointers toward the problem. But don’t let ChatGPT fix the code for you; have it only suggest areas to review.

- Edge cases in your code. The model has learned from a vast amount of code, so it can suggest edge cases that your code won’t handle and that you might not figure out quickly yourself.

- Product ideas. By asking questions about the product, you can get a list of possible uses for your software based on other products and ideas from which it has learned. It is unlikely to offer something non-standard, but it can find gaps in your product compared to others.

- Architectural and infrastructure options. When evaluating various strategies for a task that you know will require infrastructure changes, it can be helpful to get ChatGPT’s perspective.

- Test cases. ChatGPT can be seen as a powerful low-code tool for writing test cases using various languages and frameworks. ChatGPT takes natural language as input, so you can write at your natural pace and still be understood, unlike pattern-based models that often rely on specific language structures or key phrases.
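For a sense of what edge cases and test cases look like in practice, here is a small sketch of the kind of output you might ask for. The `average` function and the four tests are hypothetical examples written for this article, not ChatGPT output; they use plain `assert` statements so they run without any test framework.

```python
def average(values):
    """Return the arithmetic mean of a list of numbers.

    Raises ValueError for an empty list instead of dividing by zero.
    """
    if not values:
        raise ValueError("average() needs at least one value")
    return sum(values) / len(values)


def test_typical_input():
    assert average([2, 4, 6]) == 4


def test_single_value():
    # Edge case: a list with only one element.
    assert average([5]) == 5


def test_negative_values():
    # Edge case: values that cancel each other out.
    assert average([-1, 1]) == 0


def test_empty_list_is_rejected():
    # Edge case: no data at all should raise a clear error.
    try:
        average([])
    except ValueError:
        return
    raise AssertionError("expected ValueError for an empty list")


if __name__ == "__main__":
    for test in (
        test_typical_input,
        test_single_value,
        test_negative_values,
        test_empty_list_is_rejected,
    ):
        test()
    print("all tests passed")
```

The empty-list case is the sort of input that is easy to overlook when writing the function and easy to surface by asking what inputs would break it.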

Now let’s discuss how best not to use it:

- Please don’t use ChatGPT to learn to code. You cannot be completely sure that the generated code is correct. It may be functional, but there may be better ways to write it. For example, when writing React components, it uses a single generic props object and does not explicitly define each property, as is the industry standard. Also, it doesn’t automatically abstract similar code into a function, so its code is usually too long.

- Additionally, don’t use it to generate code that requires a lot of context. It’s impossible to give a model the context of an entire codebase or product, so if you don’t yet have a clear idea of how to approach a problem, ChatGPT helps only a little.

- Also, don’t use it for school or university assignments. Besides the fact that the answer may be wrong, you will not learn anything. Practice is what we need the most, so if you cheat with ChatGPT, you are simply depriving yourself of a better career.

According to a Semafor report, OpenAI is hiring hundreds of contractors around the globe to help ChatGPT improve at coding. Its knowledge base includes much of the code on GitHub, and it draws on public technical documentation for many projects and products, from AWS to Linux commands. But in its current incarnation, ChatGPT is best treated as a super-powerful search tool that complements your existing coding practice rather than replacing it.

ChatGPT Pricing

Well, in light of its success, what is the cost of ChatGPT? The basic version remains free. However, OpenAI offers a paid, advanced version for professionals. ChatGPT Plus costs $20 per month and offers users priority access, faster responses, and early access to updates and new features.

But it is difficult to predict whether the free version will remain and how much ChatGPT will cost in the future. After all, OpenAI is currently spending about $3 million a month to keep ChatGPT running, which is about $100,000 per day.

But these expenses do not trouble OpenAI, which has doubled its market valuation to $29 billion and found a few investors besides Microsoft with its $10 billion. OpenAI itself expects $1 billion in revenue by 2024, according to Reuters. Those figures could increase significantly with the introduction of OpenAI’s GPT-4.

Useful Tips for Getting Started With ChatGPT

You can get started with ChatGPT by signing up for an OpenAI account for free. However, it is recommended that you read a few short disclaimers before registering. The website warns you not to enter sensitive information. In addition, your conversations may be used to improve the model.

- Register. When you register with ChatGPT, you may not get in on the first try. Try again every 20 minutes until you gain access.

- Start a new chat for each request. ChatGPT uses the earlier messages in a chat as context for its answers, so setting up a separate conversation for each specific purpose is recommended. Each conversation is saved in the navigation bar on the left side of the screen.

- Give clear instructions. Start by clearly defining what task or results you want from ChatGPT or what you want to achieve.

- Specify. Ask ChatGPT to respond with a summary of what you entered. If any clarifications or additions need to be made, this is the time to make them.

- Always review content and leave feedback. ChatGPT has limitations, so check all generated content before publishing or submitting it anywhere. OpenAI uses feedback to improve the model, so remember to let it know when it has done a great job and when it has not.

- Experiment. This is a new tool, so take the time to explore, test, and find out what works best for you. Ask questions, research, and dig deeper. The greatest results are achieved when you and ChatGPT work together.

Bard – ChatGPT’s Biggest Competitor

Google’s core business is web search, but the company has long touted itself as an innovator in artificial intelligence. One of Google’s test products is the Apprentice Bard chatbot, powered by LaMDA (Language Model for Dialogue Applications), which is available through the AI Test Kitchen app. But this version is extremely limited; it can only generate text relevant to a few queries.

While Google has deep knowledge of the AI behind ChatGPT (it was the company that invented the key technology, the transformer, which is the “T” in GPT), the company has so far taken a more cautious approach to sharing its tools with developers. Therefore, unlike ChatGPT in its early days, Bard is not open to all Internet users. Google doesn’t want to put the technology’s reputation at risk because, like ChatGPT and similar systems, it can generate false, toxic, and biased information.

The reasons for the more restrained approach are clear. According to Google’s own analysis, its technology lags behind OpenAI’s claimed performance. OpenAI outperformed Google’s tools in every category, and those tools also fell short of human accuracy in rating content.

Preply, a global language learning platform, has published a study that compared Google’s intelligence to ChatGPT’s. A group of communications experts evaluated both tools, scoring each AI platform on 40 tasks. The test showed that ChatGPT beat Google 23:16 with one draw. Google, however, did better on basic questions and on queries where the information changes over time.

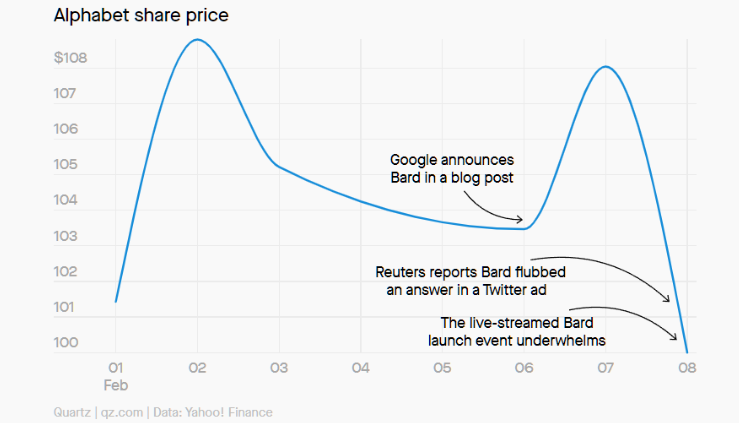

Google’s dilemma

But the skyrocketing popularity of ChatGPT prompted Google to rush to announce Bard, even though it wasn’t ready for a public release yet. The haste had its consequences: after Bard made a mistake in a presentation posted on Twitter, the firm’s market value dropped by as much as $100 billion.

However, Google has its advantages. Once Bard is improved and configured, more than 1.1 billion users will be able to access it with very little effort, since it can be built into the existing search field. But here, Google will face a choice: if its advanced chatbot gives a detailed answer to any question, will this technology cannibalize the company’s profitable search advertising? After all, people will have fewer reasons to click on sponsored links.

Bottom Line

Admittedly, ChatGPT, Bard, and other AI chatbots, imperfect as they may be, will play an increasingly important role in shaping our digital world. But no matter how super-useful these chatbots are, they cannot replace a person. We believe that qualified people are the main value of any company.

By the way, we at Relevant have dozens of experts to help you stand out from competitors. As a trusted ML/AI software development company, we harness the potential of artificial intelligence to deliver new business solutions and perceptive experiences. We can integrate AI into your current infrastructure to increase customer engagement, reduce human error, and maximize profit. Our competencies also include:

- Natural language processing services

- AI&ML software and app development

- Advanced analytics solutions

- Smart assistants & AI chatbot development services

So contact us to augment your engineering teams with our AI experts and create software solutions that solve business problems in your sphere.